FPGA-Powered Computer Vision: Building Ultra-Low-Latency Vision Systems

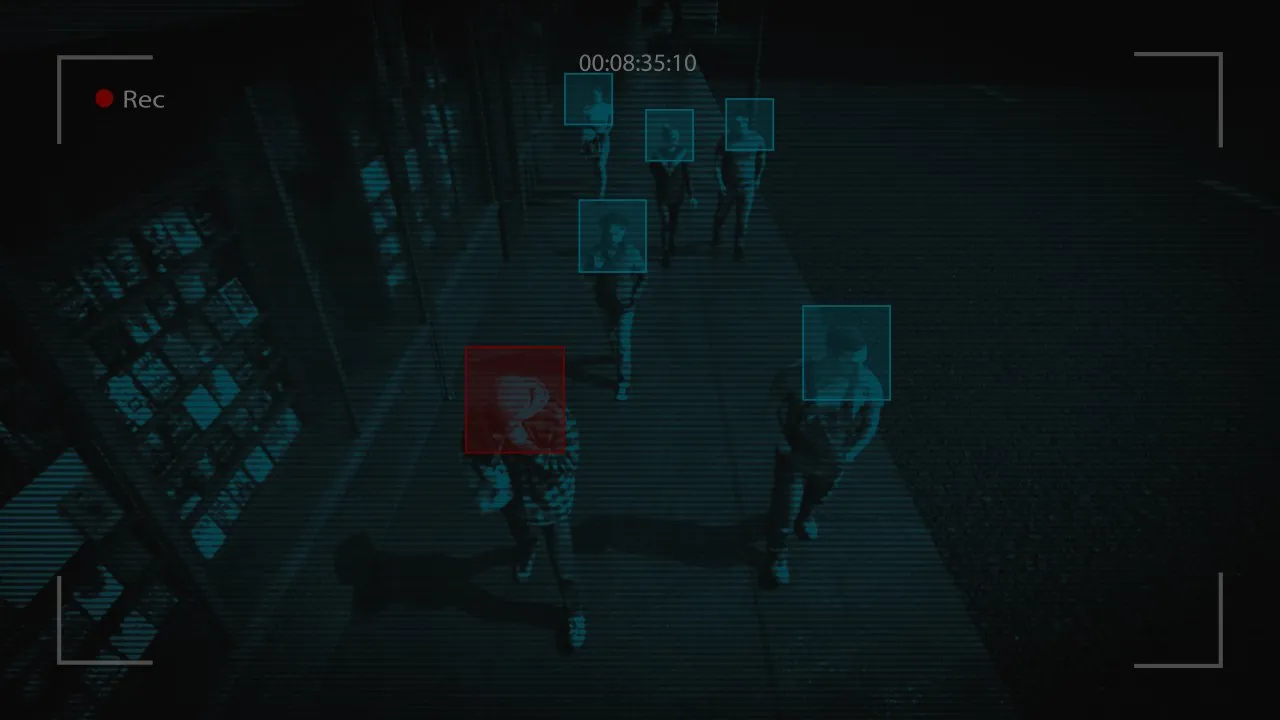

Computer vision has become a cornerstone of modern technology, enabling machines to perceive and interpret the visual world. From autonomous vehicles and robotics to industrial inspection and smart surveillance, vision systems increasingly operate in environments where milliseconds, or even microseconds, matter. In these scenarios, Field‑Programmable Gate Arrays (FPGAs) have emerged as a powerful platform for building ultra‑low‑latency computer vision systems that traditional processors struggle to match.

Why Latency Matters in Computer Vision

Latency is the time between capturing an image and producing a usable result, such as detecting an object or triggering a control action. In many applications, high throughput alone is not enough. Systems must respond predictably and immediately.

Consider an autonomous drone avoiding obstacles, a robotic arm aligning parts on an assembly line, or a driver-assistance system detecting pedestrians. Even small delays can degrade performance or create safety risks. CPUs and GPUs can process vision workloads quickly on average, but operating-system scheduling, memory hierarchies, and contention between tasks often make their delays vary from frame to frame. FPGA designs avoid much of this variability because the processing runs in dedicated hardware rather than in scheduled software.

How FPGAs Differ from CPUs and GPUs

FPGAs are fundamentally different from software‑driven processors. Instead of executing instructions sequentially, FPGAs allow developers to configure custom hardware pipelines that process data as it flows through the device.

In a vision pipeline, this means that image capture, filtering, feature extraction, and decision logic can all operate simultaneously in hardware. In a fully pipelined design, each stage accepts new data on every clock cycle, so the latency from input to output is a fixed number of cycles that is known at design time.
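To make the idea of stages working simultaneously concrete, here is a minimal software model in Python. It is not FPGA code. It assumes a hypothetical three-stage pipeline in which each stage holds one value in a register and all registers update on the same clock tick. The name `run_pipeline` and the stage functions are illustrative only.

```python
def run_pipeline(stages, inputs):
    """Cycle-by-cycle model of a register pipeline.

    Each stage holds one value in a register. On every clock tick all
    stages update together: stage i applies its function to the value
    that stage i-1 held on the previous tick.
    Returns (cycle, value) pairs for results leaving the last stage.
    """
    regs = [None] * len(stages)   # None marks an empty slot (a bubble)
    outputs = []
    # Extra empty ticks at the end let the pipeline drain.
    feed = list(inputs) + [None] * len(stages)
    for cycle, x in enumerate(feed, start=1):
        # Build the next state from the current one so that every
        # stage advances on the same tick.
        prev = [x] + regs[:-1]
        regs = [None if v is None else f(v) for f, v in zip(stages, prev)]
        if regs[-1] is not None:
            outputs.append((cycle, regs[-1]))
    return outputs


# Hypothetical stages standing in for filter, feature, and decision logic.
stages = [lambda p: p + 1, lambda p: p * 2, lambda p: p - 3]
results = run_pipeline(stages, [10, 20, 30, 40])
print(results)
```

With these assumed stages, the first result leaves the pipeline on cycle 3 (one cycle per stage), and a new result follows on every cycle after that. Latency is set by the pipeline depth, while throughput stays at one value per clock.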

CPUs excel at flexibility and control logic but are limited by instruction execution and context switching. GPUs deliver massive parallelism but typically rely on batching and on memory transfers between host and device, both of which add latency. FPGAs occupy a different position: they offer fine-grained parallelism with cycle-level control over timing, which makes them well suited to real-time vision tasks.

Streaming Architectures for Vision Pipelines

One of the biggest advantages of FPGAs in computer vision is their ability to implement streaming architectures. Instead of waiting for an entire image frame to be stored in memory, an FPGA can begin processing pixels as soon as they arrive from a camera sensor.

For example, an FPGA‑based system can:

- Apply image filtering while pixels are still being captured

- Perform edge detection line by line

- Extract features without buffering full frames

- Trigger actions before the image capture is complete

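This approach dramatically reduces end-to-end latency. Because processing overlaps with capture, the delay between a pixel arriving and its result leaving can be a few image lines rather than a full frame. For simple pipelines such as filtering or edge detection, that delay is often measured in microseconds, well below what frame-buffered CPU or GPU systems typically achieve.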
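The line-by-line behavior described above can be sketched in software. The following Python model is a sketch under stated assumptions, not a hardware implementation: it computes a 3x3 window sum over pixels arriving in raster order, storing only two previous lines and a 3x3 window, which mirrors the line-buffer structure commonly used in streaming designs. The function name `stream_box3x3` and the choice of a box sum as the filter are assumptions made for illustration.

```python
def stream_box3x3(pixels, width):
    """Yield (pixels_consumed, row, col, window_sum) in raster order.

    Only two previous lines and a 3x3 window are stored, never a full
    frame. (row, col) is the center of the window that was summed.
    """
    line1 = [0] * width                    # previous row (y - 1)
    line2 = [0] * width                    # row before that (y - 2)
    window = [[0] * 3 for _ in range(3)]   # 3x3 sliding window
    for n, p in enumerate(pixels):
        y, x = divmod(n, width)
        # Column entering the window: pixels at (y-2, x), (y-1, x), (y, x).
        col = (line2[x], line1[x], p)
        # Shift the line buffers down by one row at this column.
        line2[x] = line1[x]
        line1[x] = p
        # Shift the window left and append the new column on the right.
        for r in range(3):
            window[r] = [window[r][1], window[r][2], col[r]]
        # The window is fully inside the image once two rows and two
        # columns of the current row have been seen.
        if y >= 2 and x >= 2:
            yield n + 1, y - 1, x - 1, sum(map(sum, window))


# A 4x4 test image whose pixel values are simply 0..15.
outputs = list(stream_box3x3(range(16), width=4))
print(outputs[0])
```

In this model the first result is available after `2 * width + 3` pixels have arrived (11 pixels for the 4-pixel-wide test image), long before the frame is complete. For a 1920-pixel-wide image that is 3,843 pixels, compared with the 2,073,600 pixels a design would wait for if it buffered a full 1920x1080 frame first.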

Determinism and Real‑Time Guarantees

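Ultra-low latency is only valuable if it is predictable. FPGAs provide deterministic timing because the hardware logic executes in a fixed number of clock cycles. In a purely streaming datapath there are no cache misses, no operating system interrupts, and no background tasks competing for resources. Designs that route data through external DRAM or a shared bus can reintroduce some variability, so the most timing-critical path is usually kept in on-chip logic and memory.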

This determinism is crucial in applications such as:

- Autonomous driving and advanced driver‑assistance systems (ADAS)

- Industrial robotics and motion control

- Medical imaging and surgical guidance

- Aerospace navigation and targeting systems

In these environments, worst-case latency matters more than average performance, and FPGAs are well suited to meeting strict real-time constraints.
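The difference between average and worst-case latency is easy to see with a little arithmetic. The Python sketch below assumes a hypothetical pipeline of 3,000 clock cycles running at 150 MHz and compares it with a set of invented latency samples for a software path. None of these numbers are measurements; they are chosen only to illustrate the calculation.

```python
def pipeline_latency_us(cycles, clock_hz):
    """Latency of a fixed-length pipeline: cycle count divided by clock rate."""
    return cycles / clock_hz * 1e6


def latency_summary(samples_us):
    """Mean, worst case, and jitter (max minus min) of latency samples."""
    return {
        "mean_us": sum(samples_us) / len(samples_us),
        "worst_us": max(samples_us),
        "jitter_us": max(samples_us) - min(samples_us),
    }


# Hypothetical fixed pipeline: the same cycle count on every frame.
fpga_us = pipeline_latency_us(cycles=3000, clock_hz=150e6)
fixed = latency_summary([fpga_us] * 5)

# Invented software-path samples (microseconds) with one slow outlier.
variable = latency_summary([900.0, 950.0, 1000.0, 1100.0, 4000.0])

print(fixed)
print(variable)
```

Under these assumptions the fixed pipeline takes about 20 microseconds on every frame, so its mean and worst case coincide and its jitter is zero. The invented software samples average 1,590 microseconds but have a worst case of 4,000, and a real-time system has to be designed around that worst case.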

Power Efficiency at the Edge

Many vision systems operate at the edge, where power, thermal limits, and physical space are constrained. GPUs, while powerful, often consume significant power and require active cooling. CPUs running complex vision software can also be inefficient for continuous workloads.

FPGAs offer excellent performance per watt because they implement only the logic required for a given task. There is no instruction fetch and decode overhead, and logic that is not part of the design does not switch, although the fabric still draws some static power. This makes FPGA-powered vision systems especially attractive for embedded and mobile platforms such as drones, smart cameras, and industrial sensors.

Development Challenges and Tooling Advances

Despite their advantages, FPGAs are not without challenges. Traditionally, FPGA development required hardware description languages (HDLs) such as VHDL or Verilog, which demand specialized skills and longer development cycles.

However, the ecosystem has evolved rapidly. High‑level synthesis (HLS) tools now allow developers to describe vision algorithms in C, C++, or OpenCL and compile them into hardware. Vision‑specific libraries, pre‑built IP cores, and camera interfaces further reduce development time.

While FPGA development still requires careful attention to timing, data flow, and resource usage, the barrier to entry is much lower than it was a decade ago.

FPGA Vision vs GPU Vision

The choice between FPGA and GPU often depends on the application’s priorities. GPUs are well-suited for:

- Complex vision models with frequent updates

- Deep learning inference with large neural networks

- Environments where latency requirements are relaxed

FPGAs excel when:

- Latency must be minimal and deterministic

- Power efficiency is critical

- Vision pipelines are stable and well‑defined

- Processing must occur close to the sensor

As a result, many modern systems adopt hybrid designs, using GPUs for training and high‑level perception while deploying FPGAs for real‑time, safety‑critical vision tasks.

The Future of FPGA‑Powered Vision

As vision systems become more tightly coupled to physical machines, the demand for ultra-low-latency processing will continue to grow. FPGA fabric is increasingly offered as part of system-on-chip (SoC) devices that combine CPUs, programmable logic, and AI accelerators on a single chip, so one device can cover both deterministic processing and flexible software.

These platforms enable developers to place time‑critical vision processing in FPGA logic, while higher‑level decision‑making runs on embedded CPUs. The result is a tightly integrated, efficient, and responsive vision system.

Final Thoughts

FPGA-powered computer vision represents a shift from software‑centric processing to hardware-optimized perception. By exploiting streaming architectures, true parallelism, and deterministic timing, FPGAs enable vision systems that respond faster and more predictably than traditional approaches.

While they may not replace CPUs or GPUs in every scenario, FPGAs play a crucial role wherever ultra‑low latency, reliability, and efficiency are paramount. As tools improve and applications demand ever‑faster reactions, FPGA-based vision systems are poised to become a foundational technology for real‑time intelligent machines.