Fall Detection and Abnormal Posture Recognition in Security Video Systems

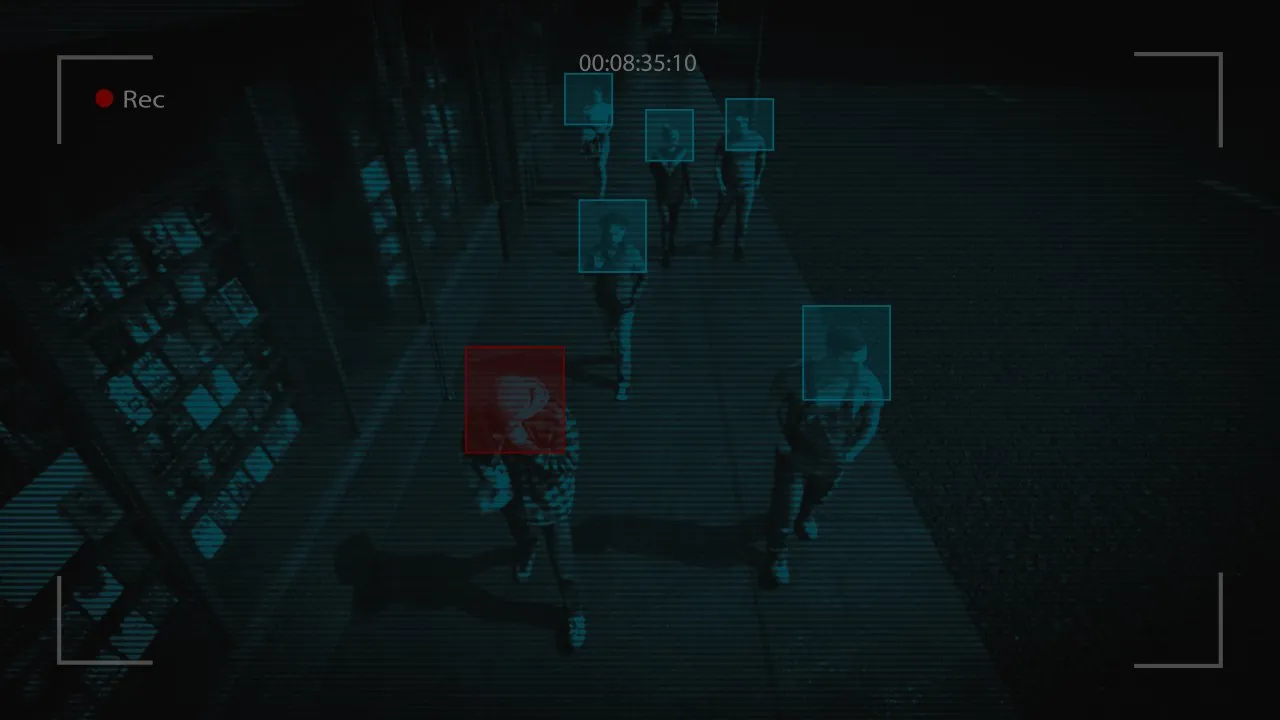

Every stumble, every unexpected collapse, every moment someone needs help. These critical events unfold in seconds. In hospitals, nursing homes, warehouses, and public spaces, the difference between a quick response and a delayed one can mean everything. Traditional security cameras record everything, but they don’t understand what they’re seeing. Artificial intelligence has changed that, turning passive surveillance into active guardianship.

Let’s explore how AI-powered fall detection evolved, how these systems recognize danger today, and where this technology is headed.

From Pixel Grids to Human Skeletons

The first challenge in any posture recognition system is getting from raw video frames to something semantically meaningful. Raw pixels are noise-heavy and viewpoint-dependent. A person lying on the floor looks completely different depending on the camera angle, lighting, and occlusion. The solution the field has largely converged on is human pose estimation: extracting a skeletal representation of the body, typically as a set of keypoints (joints such as shoulders, hips, knees, and ankles) connected by limbs.

Models like OpenPose, MediaPipe, and more recent transformer-based architectures such as ViTPose have made real-time, multi-person keypoint detection not just feasible but deployable on modest hardware. Once you have a skeleton, you have a representation that is far more robust to clothing variations, lighting changes, and moderate occlusion than raw image features.

But a skeleton at a single moment in time tells you only so much. A person lying on the ground might be doing yoga, taking a nap, or having a cardiac event. The difference is context, which means you need to reason over sequences of frames, not individual snapshots. This is where temporal modeling enters the picture.

The Temporal Dimension: Understanding Motion, Not Just Position

A fall is not a posture. It is a transition. The defining characteristic is rapid, uncontrolled downward movement followed by a sustained horizontal body position. Any effective fall detection system needs to capture those dynamics: the velocity of joint movement, the trajectory of the center of mass, and the absence of recovery motion afterward.
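As a rough illustration of the dynamics described above, the sketch below estimates the downward speed of the hip midpoint across a sequence of 2D keypoints. The keypoint layout (a dict with `left_hip` and `right_hip` as `(x, y)` pixel coordinates, with y growing downward), the frame rate, and the function names are all assumptions made for this example, not the output format of any particular pose library.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Frame = Dict[str, Point]  # hypothetical layout: joint name -> (x, y) in pixels


def hip_center(frame: Frame) -> Point:
    """Midpoint of the two hips, a crude stand-in for the center of mass."""
    (lx, ly), (rx, ry) = frame["left_hip"], frame["right_hip"]
    return ((lx + rx) / 2.0, (ly + ry) / 2.0)


def vertical_velocities(frames: List[Frame], fps: float) -> List[float]:
    """Downward velocity between consecutive frames, in pixels per second.

    Image coordinates grow downward, so positive values mean the hips
    are moving toward the floor.
    """
    ys = [hip_center(f)[1] for f in frames]
    return [(b - a) * fps for a, b in zip(ys, ys[1:])]


def peak_downward_speed(frames: List[Frame], fps: float) -> float:
    """Largest downward velocity in the sequence (0.0 if fewer than 2 frames)."""
    return max(vertical_velocities(frames, fps), default=0.0)
```

A real system would normalize by the person's apparent height so the value does not depend on distance from the camera, and would also check that the body stays low afterward.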

Early rule-based approaches tried to capture this with hard thresholds. If the bounding box height-to-width ratio drops below a certain value within N frames, flag a fall. These systems are brittle. They fail on people who bend down slowly, on camera angles that don’t produce clear silhouette changes, and on environments with cluttered backgrounds.
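The kind of hard-threshold rule described above might look like the following sketch. The two ratio thresholds and the window length are illustrative values chosen for the example, not figures from any published system.

```python
from typing import List, Tuple

Box = Tuple[float, float]  # (width, height) of a person's bounding box, in pixels


def rule_based_fall(
    boxes: List[Box],
    upright_ratio: float = 1.5,  # assumed: height/width at or above this means standing
    flat_ratio: float = 0.8,     # assumed: height/width below this means lying down
    window: int = 15,            # assumed: max frames allowed for the transition
) -> bool:
    """Flag a fall if the box goes from upright to flat within `window` frames."""
    ratios = [h / w for w, h in boxes if w > 0]
    for i, ratio in enumerate(ratios):
        if ratio < upright_ratio:
            continue  # not upright at frame i, so no transition can start here
        following = ratios[i + 1 : i + 1 + window]
        if any(r < flat_ratio for r in following):
            return True
    return False
```

The brittleness is easy to see: a person who lies down quickly on a bed triggers the rule, while a fall seen from directly overhead may never change the box ratio at all.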

More robust solutions use recurrent architectures such as LSTMs and GRUs or, increasingly, graph neural networks (GNNs) that model the human skeleton as a graph and propagate information along anatomical connections over time. The graph formulation is particularly elegant: it captures the fact that the relationship between, say, a hip and a knee is structurally meaningful in a way that a flat feature vector cannot easily represent. Spatial-Temporal Graph Convolutional Networks (ST-GCN) have become a dominant architecture for skeleton-based action recognition precisely because they encode this structure explicitly.
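To make the graph formulation concrete, here is a minimal sketch of a skeleton adjacency matrix and a single neighbor-aggregation step. The reduced 7-joint skeleton and the symmetric normalization are assumptions for illustration. This is the general idea behind a spatial graph convolution, not the ST-GCN implementation itself, which adds learned weights, partitioning strategies, and temporal convolutions.

```python
from typing import List, Sequence, Tuple

# Hypothetical reduced skeleton; real pose models use more joints.
JOINTS = ["head", "l_shoulder", "r_shoulder", "l_hip", "r_hip", "l_knee", "r_knee"]
BONES = [
    ("head", "l_shoulder"), ("head", "r_shoulder"),
    ("l_shoulder", "r_shoulder"),
    ("l_shoulder", "l_hip"), ("r_shoulder", "r_hip"),
    ("l_hip", "r_hip"),
    ("l_hip", "l_knee"), ("r_hip", "r_knee"),
]

Matrix = List[List[float]]


def adjacency(joints: Sequence[str], bones: Sequence[Tuple[str, str]]) -> Matrix:
    """Symmetric adjacency matrix with self-loops (A + I)."""
    index = {name: i for i, name in enumerate(joints)}
    n = len(joints)
    a = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for u, v in bones:
        a[index[u]][index[v]] = 1.0
        a[index[v]][index[u]] = 1.0
    return a


def normalize(a: Matrix) -> Matrix:
    """Symmetric normalization D^(-1/2) A D^(-1/2), common in graph convolutions."""
    deg = [sum(row) for row in a]
    n = len(a)
    return [[a[i][j] / (deg[i] * deg[j]) ** 0.5 for j in range(n)] for i in range(n)]


def aggregate(a_hat: Matrix, features: Matrix) -> Matrix:
    """One aggregation step: each joint mixes in features from its neighbors."""
    n, channels = len(a_hat), len(features[0])
    return [
        [sum(a_hat[i][k] * features[k][c] for k in range(n)) for c in range(channels)]
        for i in range(n)
    ]
```

After one step, a signal placed on the left hip reaches the left knee and left shoulder but not the head, which is exactly the anatomical locality a flat feature vector cannot express.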

Toward Multi-Modal Understanding and Real-World Deployment

Skeleton-based detection provides the foundation for contemporary fall detection systems, but accurate results also depend on situational awareness. A single camera can miss a fall because of its viewing angle, poor lighting, crowding, or an obstructed view. This is where multi-modal sensing comes in: cameras, depth sensors, accelerometers, infrared sensors, and radar each contribute information the others lack. When a wearable device registers a sudden acceleration at the same moment a camera detects a collapse, the two signals cross-verify each other and reduce false positives.
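One simple way to express this cross-verification is to confirm a camera detection only when a wearable reports a spike close to it in time. The sketch below assumes both sensors emit timestamped events with a confidence score. The `Event` type, the 2-second gap, and the 0.5 confidence floor are hypothetical choices for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Event:
    source: str        # e.g. "camera" or "wearable"
    timestamp: float   # seconds on a shared clock (assumed synchronized)
    confidence: float  # 0.0 to 1.0


def confirmed_falls(
    camera: List[Event],
    wearable: List[Event],
    max_gap_s: float = 2.0,  # assumed tolerance between the two sensors
    min_conf: float = 0.5,   # assumed minimum confidence for either sensor
) -> List[float]:
    """Timestamps of camera detections corroborated by a wearable event."""
    confirmed = []
    for cam in camera:
        if cam.confidence < min_conf:
            continue
        corroborated = any(
            w.confidence >= min_conf and abs(w.timestamp - cam.timestamp) <= max_gap_s
            for w in wearable
        )
        if corroborated:
            confirmed.append(cam.timestamp)
    return confirmed
```

Requiring agreement lowers false positives at the cost of missing falls when one sensor is absent or occluded, so a deployed system would likely fall back to a single-sensor path with a stricter threshold.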

Edge computing makes this practical. Smart cameras and similar devices run lightweight models on-device, processing video in real time and raising alerts with low latency, without a round trip to the cloud. This also improves privacy, which matters in hospitals and homes, where the video never has to leave the premises.

Federated learning takes this a step further. Each site trains on its own footage and shares only model updates, so the systems improve without sensitive video ever leaving the facility. Better detection of gait and posture at one facility can then benefit people at many others, sharpening the detection of subtle dangers. Perceptive AI layers, combined with smart IoT devices, turn surveillance systems into adaptive safety systems that can flag elevated fall risk before a fall occurs.
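The core aggregation step in federated learning can be sketched as a weighted average of model parameters, where each facility's contribution is proportional to how much local data it trained on. This is a simplified sketch of federated averaging with flat parameter lists. Real deployments add secure aggregation, multiple communication rounds, and often differential privacy, none of which are shown here.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Average parameter vectors, weighted by each client's sample count.

    Only parameters and sample counts are exchanged; no video leaves a site.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same model shape")
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]
```

For example, a facility that trained on three times as many samples as another pulls the shared model three times as strongly toward its own parameters.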

Integration with Smart Environments and IoT

Fall detection doesn’t work in isolation anymore. The AI systems now connect with smart sensors, wearable devices, and building management systems. When a camera detects a fall, it can automatically trigger other responses.

Nearby smart speakers might call out asking if help is needed. Door locks could automatically open for emergency responders. The system might even pull up the person’s medical records and location data, giving first responders everything they need before they arrive.

In industrial settings, fall detection integrates with worker safety systems. If someone falls from a height or gets knocked down by equipment, the AI can halt machinery, alert supervisors, and guide rescue teams to the exact location. All of this happens faster than any human-only system could manage.
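The integrations described in this section follow an event-driven pattern: one fall event fans out to several independent responders. The sketch below shows that pattern with a small handler registry. The class name, the handler names, and the event fields (`location`, `zone`) are hypothetical, and each handler only returns a description of the action instead of talking to real devices.

```python
from typing import Callable, Dict, List

Handler = Callable[[Dict[str, str]], str]


class FallResponseDispatcher:
    """Fans a single fall event out to every registered responder."""

    def __init__(self) -> None:
        self._handlers: List[Handler] = []

    def register(self, handler: Handler) -> Handler:
        self._handlers.append(handler)
        return handler  # returned so this method works as a decorator

    def dispatch(self, event: Dict[str, str]) -> List[str]:
        results = []
        for handler in self._handlers:
            try:
                results.append(handler(event))
            except Exception as exc:  # one failing device must not block the rest
                results.append(f"{handler.__name__} failed: {exc!r}")
        return results


dispatcher = FallResponseDispatcher()


@dispatcher.register
def announce_on_speaker(event: Dict[str, str]) -> str:
    return f"speaker near {event['location']}: asking if help is needed"


@dispatcher.register
def unlock_doors(event: Dict[str, str]) -> str:
    return f"doors unlocked on route to {event['location']}"


@dispatcher.register
def halt_machinery(event: Dict[str, str]) -> str:
    if event.get("zone") != "industrial":
        return "no machinery to halt"
    return f"machinery halted near {event['location']}"
```

Isolating each handler behind its own error boundary reflects the safety requirement here: a door controller that is offline should not prevent the alert from reaching a supervisor.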

Final Thoughts

AI-powered fall detection keeps improving. Future systems may recognize even more subtle warning signs: changes in gait that suggest balance problems, patterns of movement indicating cognitive decline, or signs of medical episodes before they cause falls. The goal isn’t just responding to emergencies, but preventing them wherever possible.

From passive recording to active protection, security cameras have become guardians. And in those crucial moments when someone needs help, every second of faster response counts.